Why technical interviews fail in engineering hiring: reasons and common patterns

In engineering hiring, the technical interview is the key screening step. Yet mismatches after joining are common: a new hire's code repeatedly fails review, or they cannot handle design work. The main causes are unclear evaluation criteria and over-reliance on potential-based judgment. This article breaks down why technical interviews fail and explains how to design evaluations and decision criteria that improve screening accuracy.

Why technical interviews fail at some companies

In engineer hiring, companies with ineffective technical interviews often share the same false assumption.

They conflate assessing "potential" with assessing "technical skill."

Misunderstanding Potential Assessment

In Japan, many firms extend new-graduate, potential-first hiring to mid-career engineer hiring, but for technical roles this is a critical mistake.

An engineer's output depends on reproducible skills and design ability. If evaluation rests on expected future growth rather than on "what they can do now," post-hire quality cannot be ensured.

In practice, some candidates can explain algorithms but introduce many bugs when implementing them. Their code is repeatedly sent back in review, which increases the team's workload.

Lack of Clear Technical Criteria

Another major issue is that technical evaluation is not clearly defined.

Many companies use vague impressions like "seems capable" or "learns fast," but this leads to interviewer-by-interviewer differences and no hiring consistency.

Also, if criteria stay unclear, the passing level becomes ambiguous, resulting in teams with wide skill gaps.

Then every hire becomes hit-or-miss, and the organization cannot stabilize its technical strength.

Causes of lower accuracy

Technical interviews fail because the evaluation design itself is flawed.

A point many companies miss is that the structure of the criteria and the way they are applied in practice are two separate things, and both need to be designed.

Vague evaluation criteria

The biggest cause is that interviews are done with unclear criteria.

For example, even if you say "assess design skill," if what counts as design skill is not broken down, each interviewer interprets it differently, and ratings are inconsistent.

As a result, one interviewer may value class design while another values knowledge of performance optimization. The criteria diverge, and a shared hiring standard effectively disappears.

In this state, even candidates at the same level get different ratings, so screening accuracy inevitably drops.

Interviewer-dependent structure

Another problem is that evaluation depends on each interviewer’s personal ability.

When a highly skilled engineer runs the interview, their judgment may stay reasonably accurate, but it cannot be transferred to other interviewers, so the organization loses repeatability.

Also, because questions and follow-up depth differ by interviewer, fairness issues arise as difficulty changes by candidate.

As a result, the whole process becomes a black box, leading to "we cannot explain why we hired this person."

If you cannot identify where ratings diverge, you need to break down the full hiring process and redesign each interview’s role and criteria.
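One way to locate where ratings diverge is to record each interviewer's score per criterion and compare the spread. The following is a minimal sketch under assumptions: the criterion names, the 1 to 5 scale, and the threshold of 1.0 are hypothetical and not taken from any specific hiring process.

```python
from statistics import pstdev


def rating_spread(ratings):
    """Population standard deviation of scores for each criterion.

    ratings: {interviewer: {criterion: score}} for ONE candidate.
    """
    by_criterion = {}
    for scores in ratings.values():
        for criterion, score in scores.items():
            by_criterion.setdefault(criterion, []).append(score)
    return {c: pstdev(v) for c, v in by_criterion.items()}


def divergent_criteria(ratings, threshold=1.0):
    """Criteria whose spread meets the (assumed) threshold, sorted by name."""
    spread = rating_spread(ratings)
    return sorted(c for c, s in spread.items() if s >= threshold)


# Hypothetical scores on a 1-5 scale for the same candidate.
example = {
    "interviewer_a": {"class_design": 4, "edge_cases": 3},
    "interviewer_b": {"class_design": 1, "edge_cases": 3},
    "interviewer_c": {"class_design": 4, "edge_cases": 2},
}
print(divergent_criteria(example))  # ['class_design']
```

A criterion that repeatedly shows a large spread is a candidate for being broken down further, since interviewers are likely interpreting it differently.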

On-site failure patterns

Vague criteria and interviewer-dependent hiring often surface as concrete issues after onboarding.

Here are common failure patterns seen in practice.

Reviews Don't Pass

When technical screening misses problems, code review is usually the first place they appear.

At first things may look fine, but once real tasks start, issues pile up: a poor grasp of design assumptions, missing exception handling, and repeated review rejections.

For example, when assigned an API design task, they may miss the intent and create an overly complex design, leading to repeated fix requests.

This doubles reviewer workload and slows the whole team. Only then does it become clear that the person is not yet at a practical level, which is something the hiring process failed to detect.

Early Turnover from Expectation Gaps

Another common failure is early resignation caused by mismatched expectations.

If skills and role are not aligned in interviews, a gap appears between assigned work and the candidate’s understanding after joining.

For example, someone hired for a role that was described as involving design may end up doing mostly implementation tasks, conclude there is no growth opportunity, and start job hunting again within three months.

This is especially common with global talent, who often prioritize market value; gaps between promise and reality quickly lead to turnover.

Such mismatches are not post-hire issues; they should be prevented at the technical interview design stage.

When evaluation and role definition are not integrated, the same failures are likely to repeat.

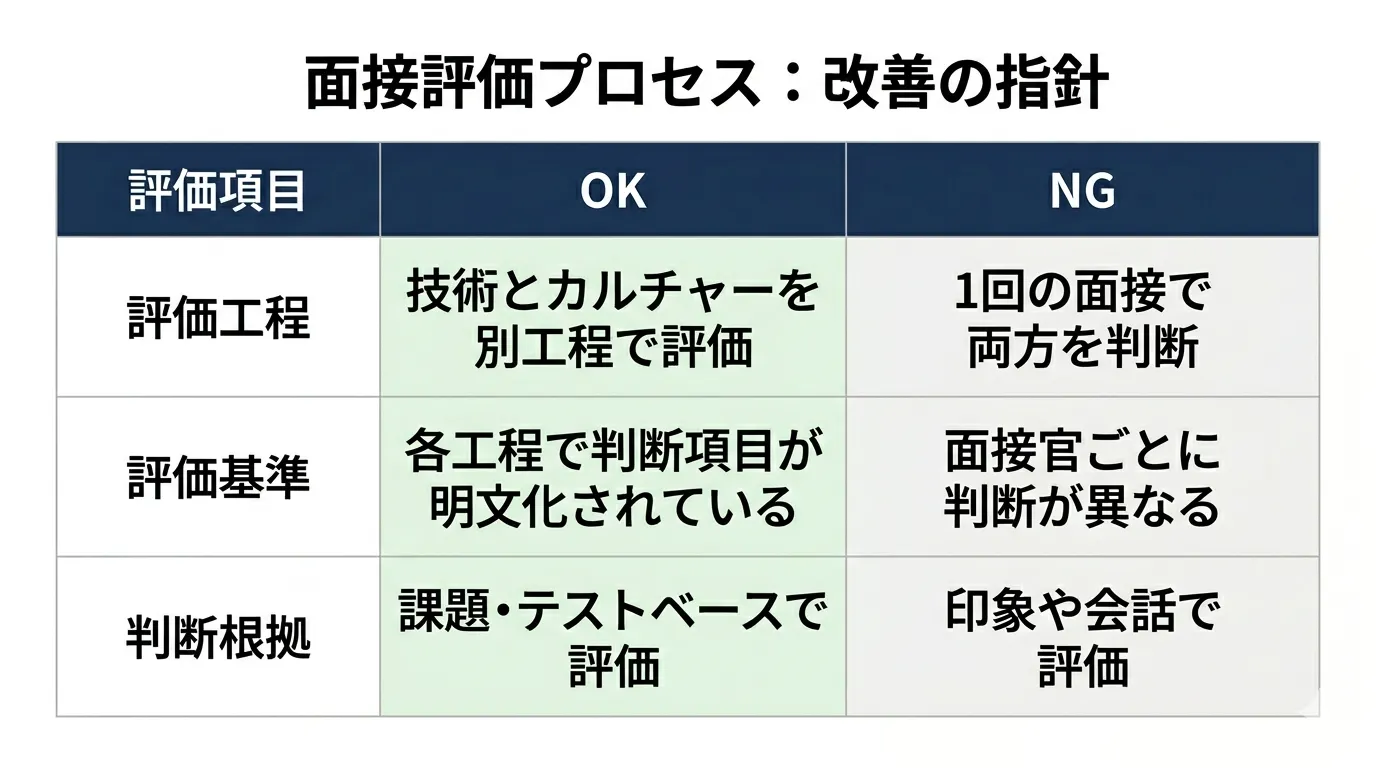

Separate technical and cultural evaluation

A key to improving technical interview accuracy is separating what you evaluate.

Many companies judge technical skill and culture fit in one interview, which lowers screening accuracy.

Risks of mixed evaluation

When technical and culture assessments are mixed, candidates who should fail are more likely to pass.

A typical case is when a smooth, likable communicator has technical weaknesses overlooked.

On the other hand, candidates with strong technical skills may be scored lower based only on speaking style or response tone, causing teams to miss needed talent.

When criteria are mixed, standards become unclear and hiring consistency is lost.

How to split the evaluation process

To solve this, clearly separate the evaluation steps.

Specifically, assess technical skills through coding tests or design tasks, and evaluate culture fit in a separate interview.

Also, document criteria for each step and fix "what is judged in which interview" to reduce interviewer variation.

Only with this separation can you accurately assess both technical ability and organizational fit.
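The separation described above can be sketched as a decision rule in which each stage is scored only against its own criteria and the stages are never averaged together. This is an illustrative sketch: the stage names, scores, and pass lines are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageResult:
    stage: str      # hypothetical stage name: "technical" or "culture"
    score: int      # score against that stage's documented criteria
    pass_line: int  # minimum score agreed before interviews start

    @property
    def passed(self) -> bool:
        return self.score >= self.pass_line


REQUIRED_STAGES = {"technical", "culture"}


def hiring_decision(results: list[StageResult]) -> bool:
    """Pass only if every required stage passes on its own criteria."""
    by_stage = {r.stage: r for r in results}
    missing = REQUIRED_STAGES - set(by_stage)
    if missing:
        raise ValueError(f"missing stage results: {sorted(missing)}")
    # No averaging: a high culture score cannot offset a technical fail.
    return all(by_stage[s].passed for s in REQUIRED_STAGES)


likable_but_weak = [
    StageResult("technical", score=2, pass_line=3),
    StageResult("culture", score=5, pass_line=3),
]
strong_but_reserved = [
    StageResult("technical", score=5, pass_line=3),
    StageResult("culture", score=3, pass_line=3),
]
print(hiring_decision(likable_but_weak))     # False
print(hiring_decision(strong_but_reserved))  # True
```

Because the two scores are kept apart, a smooth communicator cannot pass on likability alone, and a technically strong candidate is not marked down on the technical stage for speaking style.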

Domestic vs overseas hiring evaluation design

Technical interviews differ greatly between domestic and global hiring.

Using the same criteria without understanding this gap sharply reduces assessment accuracy.

Limits of the Japanese Evaluation Style

In Japan, engineer hiring often emphasizes potential and culture fit, and many decisions are based more on "growth room" than absolute skill level.

This works in organizations built around training, but not in teams that need immediate contributors.

Especially in mid-career hiring, output is expected right after joining, so potential-based hiring can heavily increase team burden.

If this structure is ignored, skill gaps surface after hiring and overall team productivity drops.

Designing Evaluation for Global Talent

By contrast, hiring global talent, especially Indian engineers, requires evaluation centered on skill consistency and problem-solving ability.

In India's engineer market, Tier 1 graduates often have strong algorithm and design skills, while Tier 2/3 talent varies widely, so screening accuracy strongly affects hiring results.

Therefore, it is essential to clearly define which university tiers to target and what level is the pass line, then assess with task-based methods.

Competition on pay and growth opportunities is also intense in global markets, and companies with vague standards are less likely to be chosen, so evaluation design directly drives hiring competitiveness.

Technical interviews designed as an extension of domestic hiring cannot adapt to this market, making hiring even harder.

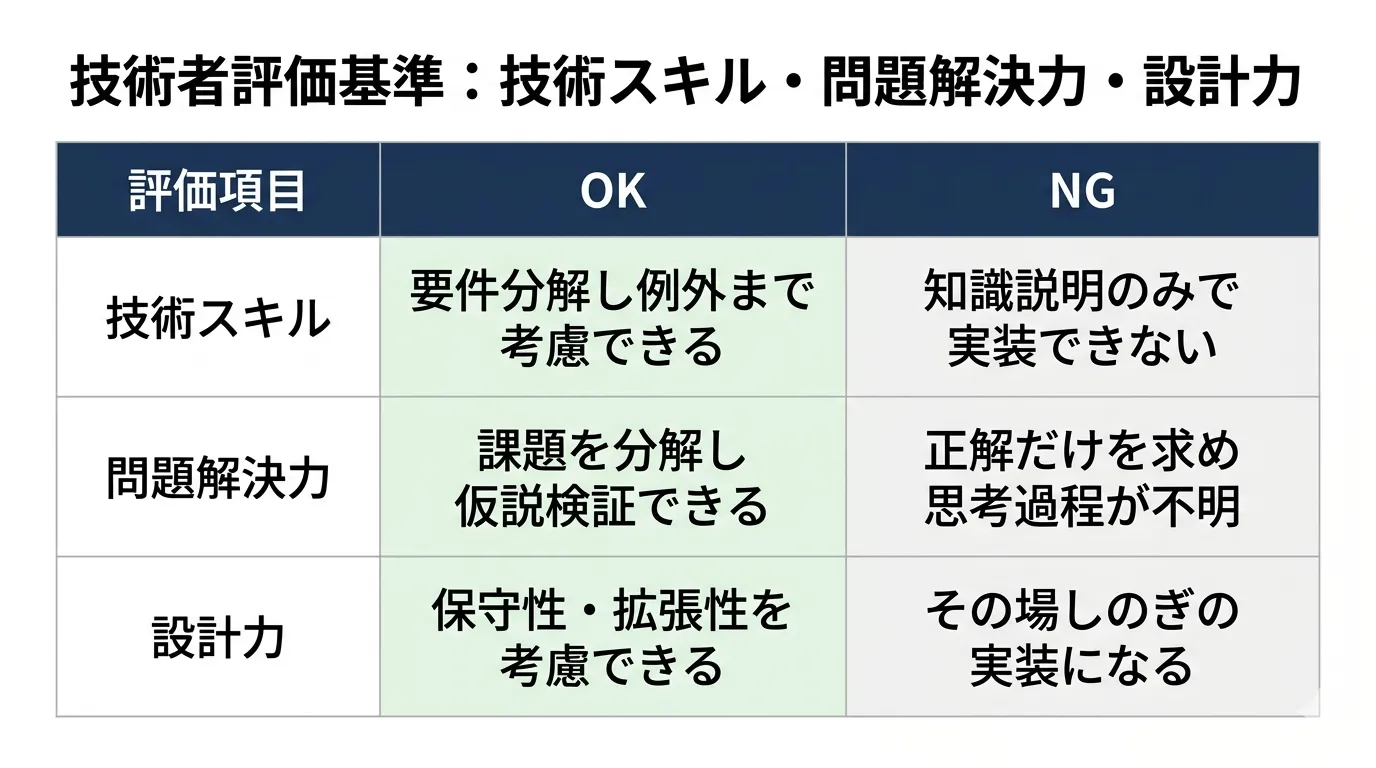

Practical technical interview criteria

Given these issues, improving technical interviews comes down to designing evaluation criteria.

The key is not abstract skill ratings, but clear standards tied to real work.

OK/NG Criteria for Skill Evaluation

First, break technical skills down into concrete behaviors.

For example, instead of saying "has coding ability," assess whether the person can break down requirements and write code that handles edge cases. Judging observable behaviors like these reduces rating variance.

It is also important to evaluate not only algorithm knowledge but also design and maintainability needed in actual work.

If knowledge and implementation are disconnected, they will not perform on the job, so decisions must be output-based.
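As an illustration, an OK/NG rubric can be written down as a list of observable behaviors, each marked as required or optional. The sketch below is only an example under assumptions: the behavior names and the choice of which ones are required are hypothetical and should be replaced with what the actual role demands.

```python
# Hypothetical rubric: observable behavior -> whether it is required to pass.
RUBRIC = {
    "breaks requirements into tasks before coding": True,
    "handles empty and boundary inputs": True,
    "explains maintainability trade-offs": False,
}


def evaluate(observations: dict[str, bool]) -> tuple[str, list[str]]:
    """Return ("OK" or "NG", required behaviors that were not observed)."""
    unrated = [b for b in RUBRIC if b not in observations]
    if unrated:
        # Force interviewers to rate every behavior, not only overall feel.
        raise ValueError(f"every behavior must be rated: {unrated}")
    failed = [b for b, required in RUBRIC.items()
              if required and not observations[b]]
    return ("NG" if failed else "OK", failed)


result = evaluate({
    "breaks requirements into tasks before coding": True,
    "handles empty and boundary inputs": False,
    "explains maintainability trade-offs": True,
})
print(result)  # ('NG', ['handles empty and boundary inputs'])
```

The returned list of failed behaviors also gives a concrete answer to "why was this candidate rejected," which an impression such as "seems capable" cannot provide.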

How to Assess Problem-Solving Ability

Another critical point is evaluating problem-solving ability.

This is not just about getting the right answer, but about checking the thinking process: how they break down issues and in what order they solve them.

For example, by presenting a task with ambiguous specs and observing how they confirm assumptions and form hypotheses, you can judge practical responsiveness.

If this process is not evaluated, the risk rises of hiring people with surface knowledge who cannot handle real problems.

In any case, technical interview accuracy will not improve unless evaluation criteria are specified to this level.

Summary

Failures in technical interviews for engineer hiring are not just interview-method issues; they are a structural problem of poor evaluation design.

Hiring with vague criteria directly hurts productivity through heavier post-hire review load and early turnover.

What matters is evaluating "repeatable skills," not potential, and separating technical and culture assessment with clear definitions of what each stage decides.

Also, since evaluation difficulty differs between Tier 1 and Tier 2/3 talent, building screening accuracy into the design greatly affects hiring success.

However, building this evaluation design only in-house often causes interviewer variance and person-dependent standards, making consistency hard to ensure.

This is even harder for global hiring, where salary levels, candidate preferences, and visa support all interact.

Phinx, led by members with engineer hiring and organization-building experience at global companies such as Rakuten and Mercari, uses a network of Indian universities from Tier 1 to Tier 3 to provide end-to-end support: technical screening, visa/COE handling, and onboarding.

Beyond referrals, we also support the design of the evaluation criteria themselves to ensure hiring consistency and accuracy.

If you are continuing interviews with unclear criteria, or lack confidence in candidate judgment, you need to review the entire hiring process structurally.

If you are unsure about the design, organizing it with external expertise is also an option.